How to Optimize Your WordPress Site Robots.txt for SEO?

Robots.txt is a plain text file stored in the root directory (the main folder) of your site. Site owners and admin users can create it to tell search engines which pages or posts on the site they should crawl. Do you want to learn how to optimize your WordPress site's robots.txt for SEO?

What is Robots.txt for SEO?

Robots.txt is a useful SEO tool that tells search engines how to crawl your website. It is stored in the root directory (also known as the main folder) of your WordPress website. Its basic format looks like this:

User-Agent: *

Allow: /wp-content/uploads/

Disallow: /wp-content/plugins/

Disallow: /wp-admin/

Sitemap: https://example.com/sitemap_index.xml
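
To see how a crawler might interpret these rules, here is a minimal Python sketch that uses the standard library's `urllib.robotparser`. It assumes the rules are written as consecutive lines in a single group, and the URLs being checked are hypothetical examples. Real search engines apply their own matching logic, so treat the results as an approximation only.

```python
from urllib.robotparser import RobotFileParser

# The sample rules from this article, written as one group of consecutive lines.
ROBOTS_TXT = """\
User-Agent: *
Allow: /wp-content/uploads/
Disallow: /wp-content/plugins/
Disallow: /wp-admin/
Sitemap: https://example.com/sitemap_index.xml
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text locally, without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# Hypothetical URLs, used only for illustration.
sample_urls = [
    "https://example.com/wp-admin/",
    "https://example.com/wp-content/plugins/some-plugin/readme.txt",
    "https://example.com/wp-content/uploads/photo.jpg",
    "https://example.com/hello-world/",
]

for url in sample_urls:
    verdict = "allowed" if parser.can_fetch("*", url) else "blocked"
    print(f"{verdict}: {url}")

# site_maps() requires Python 3.8 or newer.
print("sitemaps:", parser.site_maps())
```

With these rules, the two paths under `/wp-admin/` and `/wp-content/plugins/` are reported as blocked, while uploads and ordinary posts remain crawlable.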

Why Optimize Your WordPress Site Robots.txt for SEO?

You need robots.txt to tell search engines which pages or folders they shouldn't crawl. Without it, search engines will still crawl and index your website, and on a small site this makes little difference. But as your website grows and you publish more content, controlling what gets crawled becomes a practical necessity.

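Keep in mind that robots.txt is not a safe way to hide content or posts from visitors: the file itself is public, and anyone can still open a disallowed URL directly. It only asks search engines not to crawl those paths, which helps keep that content out of search results.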

There are different ways to optimize your WordPress site's robots.txt for SEO. Today, we will do it using Yoast SEO. If you haven't already, learn to install Yoast SEO on your WordPress site first.

Optimizing WordPress Site Robots.txt for SEO

Once you're done installing and activating Yoast SEO, follow these steps:

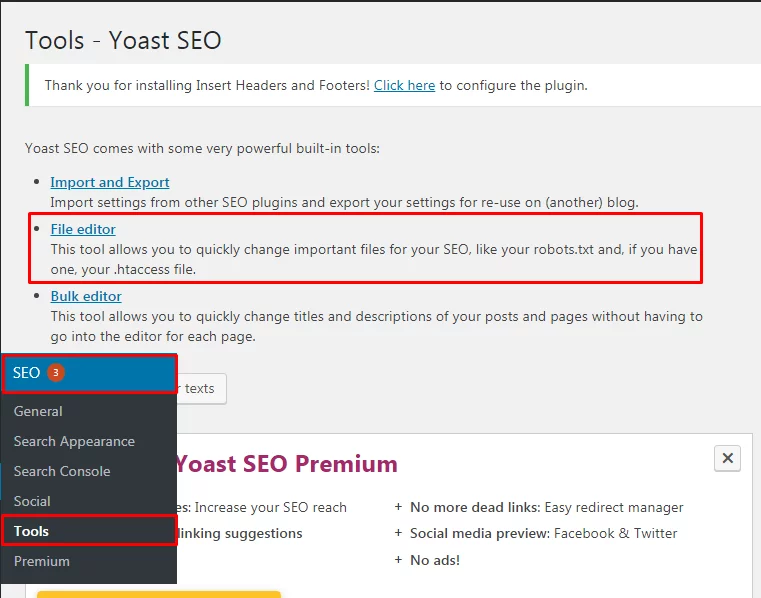

- Go to the Yoast SEO >> Tools page in your WordPress admin.

- Click on the File Editor link that appears on that page.

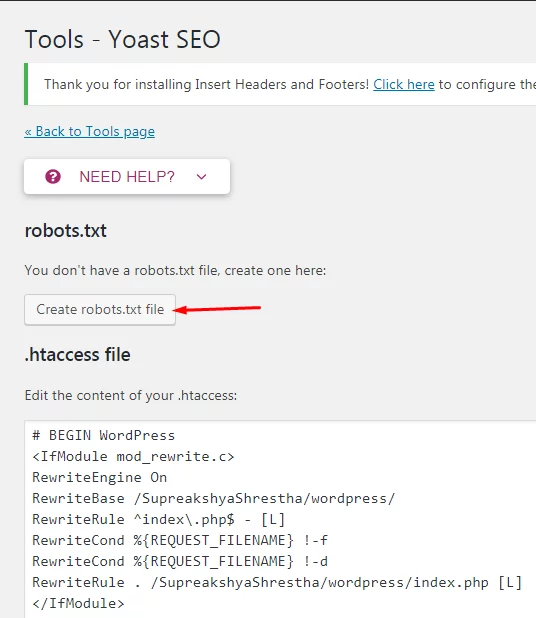

- After you click on File Editor, the next page shows the robots.txt section.

- Click on Create a robots.txt file

By default, your WordPress site adds some rules to the new robots.txt file.

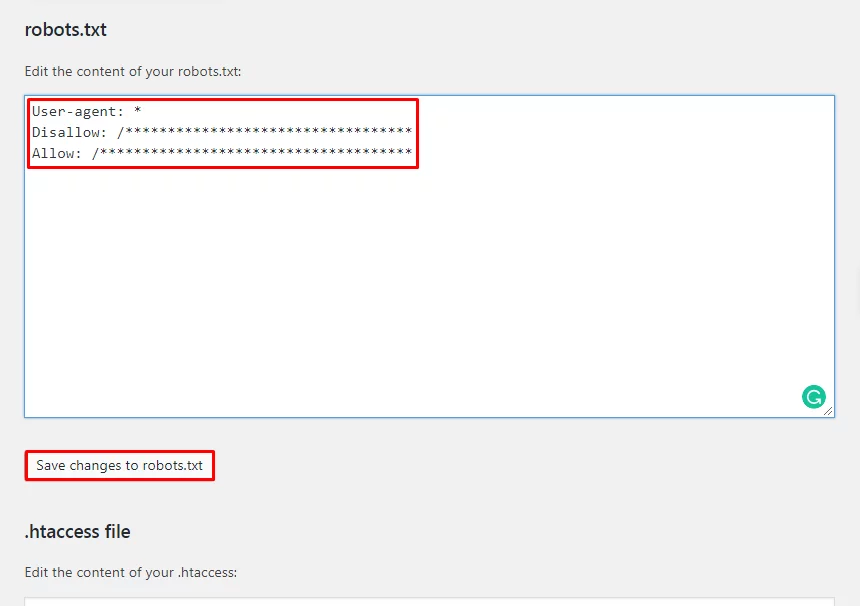

You need to remove this text, as it stops all search engines from crawling your website.

- Delete the existing text.

- Add your own robots.txt rules.

- Click on Save changes to robots.txt to save your changes.

You have now updated the robots.txt file, and search engines will crawl only the content or pages you allow.
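
If you prefer to draft your rules before pasting them into the File Editor, a small script can assemble them for you. The sketch below is only one possible approach: `build_robots_txt` is a hypothetical helper written for this article, not part of Yoast SEO or WordPress, and the paths are the sample ones used earlier.

```python
def build_robots_txt(allow, disallow, sitemap=None, user_agent="*"):
    """Assemble robots.txt text from lists of allowed and disallowed paths."""
    lines = [f"User-agent: {user_agent}"]
    lines += [f"Allow: {path}" for path in allow]
    lines += [f"Disallow: {path}" for path in disallow]
    if sitemap:
        # A blank line separates the rule group from the sitemap reference.
        lines += ["", f"Sitemap: {sitemap}"]
    return "\n".join(lines) + "\n"


rules = build_robots_txt(
    allow=["/wp-content/uploads/"],
    disallow=["/wp-content/plugins/", "/wp-admin/"],
    sitemap="https://example.com/sitemap_index.xml",
)
print(rules)
```

The printed text can be copied into the editor as-is. Replace the example domain and paths with the ones that apply to your own site.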

How Can You Test Your Robots.txt File?

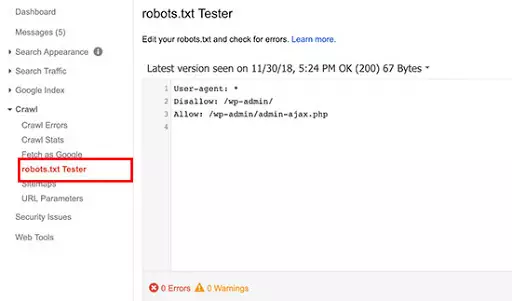

Once you are done, it is a good idea to verify the file. There are different ways to check a robots.txt file, but using Google Search Console is one of the easier ones.

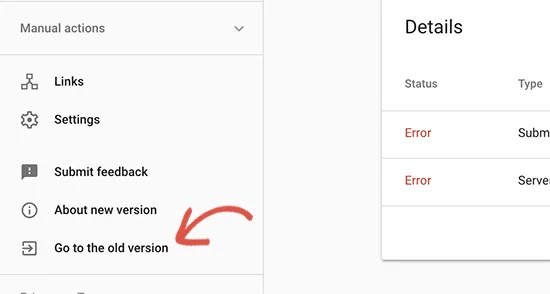

- Log in to your Google Search Console account.

- Switch to the old Google Search Console website.

- Launch the robots.txt tester tool located under the ‘Crawl’ menu.

The tool automatically fetches your website's robots.txt file and highlights any errors and warnings it finds.
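
For a quick local check before you open Search Console, a few lines of Python can catch common typos. This is a rough sketch, not a reimplementation of Google's tester: `lint_robots_txt` is a hypothetical helper, and the set of directive names it accepts is an assumption based on commonly used ones.

```python
# Directive names this sketch accepts; anything else is flagged as a likely typo.
KNOWN_DIRECTIVES = {"user-agent", "allow", "disallow", "sitemap", "crawl-delay"}


def lint_robots_txt(text):
    """Return a list of (line_number, message) pairs for suspicious lines."""
    problems = []
    seen_user_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if ":" not in line:
            problems.append((number, "missing ':' separator"))
            continue
        name, value = (part.strip() for part in line.split(":", 1))
        name = name.lower()
        if name not in KNOWN_DIRECTIVES:
            problems.append((number, f"unknown directive '{name}'"))
        elif name == "user-agent":
            seen_user_agent = True
        elif name in {"allow", "disallow"}:
            if not seen_user_agent:
                problems.append((number, f"'{name}' appears before any User-agent line"))
            if value and not value.startswith(("/", "*")):
                problems.append((number, "path should start with '/'"))
    return problems


# A deliberately broken file, used only for illustration.
broken = "User-agent: *\nDisalow: /wp-admin/\nDisallow wp-admin\nDisallow: wp-admin/\n"
for number, message in lint_robots_txt(broken):
    print(f"line {number}: {message}")
```

A file that passes this check can still behave differently from what you intend, so it is still worth confirming the result in Search Console.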

Wrapping Up:

In this tutorial, we learned how to optimize your WordPress site's robots.txt for SEO. As mentioned earlier, this file plays an important role on your WordPress site. We have briefly explained how to create it and add it to your site. If anything is unclear, please let us know.